How AI discriminates and reinforces stereotypes on platforms like TikTok, Twitter, and Facebook.

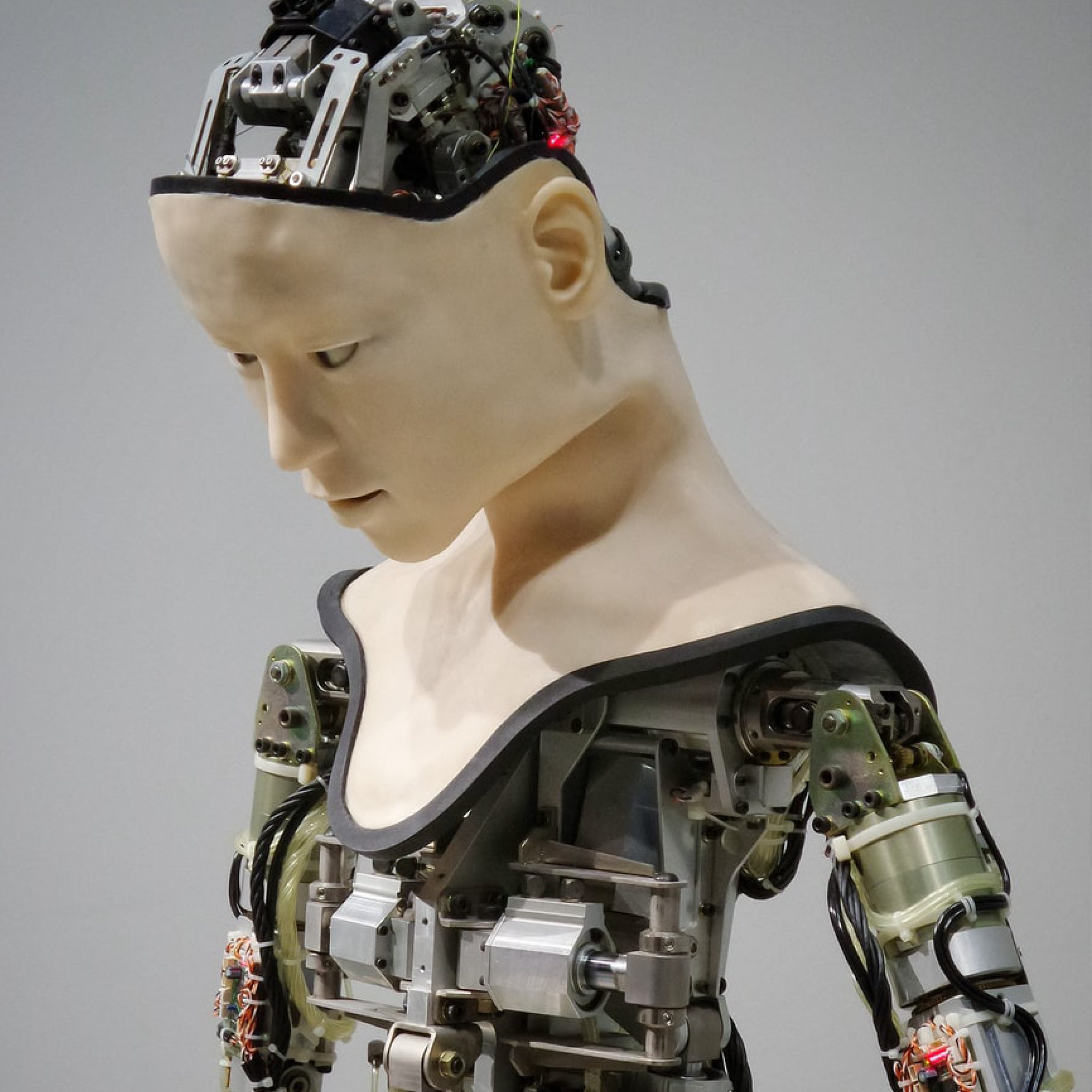

Now more than ever, technological advancement is bringing revolutionary solutions to global issues. Amid the enthusiasm that surrounds each new feat of machine intelligence, it’s essential to keep in mind the ways these same advances can harm rather than help.

Machine learning bias occurs when an artificial intelligence (AI) system produces results that reflect systemic prejudice, such as demographic, cultural, or aesthetic bias.

Machine learning falls under the umbrella of AI: it is the process of using training datasets to “teach” machines their predictive functions. Ultimately, the root of this issue is human error; incomplete datasets result in inaccurate predictions. Without broad representation of the diverse groups worldwide that use the internet, digital products are liable to reflect unintended cognitive biases that single out specific cultures and identities.

In 2020, Twitter users voiced concerns about the saliency model used to crop images, a predictive system that estimates where a viewer’s eyes are most likely to land. The algorithm did not serve all people equally. Shortly after the uproar, Twitter published an “algorithmic bias assessment,” which confirmed that the model was not treating all users fairly. The following year, the company rolled out changes to how images appear on the platform.
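To make the idea of saliency-based cropping concrete, here is a rough sketch of how such a crop could work. It assumes a saliency map is simply a grid of scores, and the function name `crop_around_peak` and the toy numbers are hypothetical; this is an illustration of the general technique, not Twitter's actual code.

```python
def crop_around_peak(saliency, crop_h, crop_w):
    """Return (top, left) of a crop window centered on the most salient cell."""
    rows, cols = len(saliency), len(saliency[0])
    # Find the cell the (hypothetical) model scored as most eye-catching.
    peak_r, peak_c = max(
        ((r, c) for r in range(rows) for c in range(cols)),
        key=lambda rc: saliency[rc[0]][rc[1]],
    )
    # Center the window on the peak, clamped so it stays inside the image.
    top = min(max(peak_r - crop_h // 2, 0), rows - crop_h)
    left = min(max(peak_c - crop_w // 2, 0), cols - crop_w)
    return top, left


# Toy 4x6 map with two "faces"; the model scores the right one slightly higher.
saliency_map = [
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.8, 0.0, 0.0, 0.9, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
]
top, left = crop_around_peak(saliency_map, crop_h=2, crop_w=2)
```

In this toy case the window covers columns 3 and 4 only, so the face at column 1 is cut out entirely. A small difference in the scores a model assigns to two people is enough to decide who stays in the picture.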

Later research showed that the algorithm (used to edit and crop photos automatically) also discriminated against disabled users, older users, and Muslims. The model had learned to crop out people with white or gray hair, headscarves, or wheelchairs. The findings emerged from a first-of-its-kind Twitter initiative hosted at the Def Con hacker conference in Las Vegas: a contest in which researchers and programmers were invited to analyze the code and demonstrate that the image-cropping algorithm was biased against particular groups of people. The company awarded cash prizes of up to $3,500 to the winner and runners-up.

Among the social media giants, Twitter has been the first to address the social concerns that have arisen as social media algorithm design has progressed. The company recognizes that it cannot tackle this issue alone and has enlisted community enthusiasts and researchers to help. The winning submission to Twitter’s “algorithmic bias challenge” used a theoretical approach to demonstrate that the model tends to favor faces that conform to stereotypical Western beauty standards, with a preference for younger, slimmer, more feminine, and lighter-skinned faces.

Similar complaints extend to the photo-sharing platform Instagram, which uses a similar algorithmic model for moderating content. Users have raised concerns about the takedown of posts and accounts belonging to activists in regions such as Crimea, Western Sahara, Kashmir, and territories within Palestine.

Removal through “user-reporting” mechanisms can be just as restrictive. Here, community standards are enforced by the platform’s own users: the posts they flag are reviewed by content moderators, who determine whether a violation has occurred. If it has, the content is removed, and repeat infringements can lead to a temporary suspension or a permanent ban.

During the height of the pandemic, many social media companies had to rely more heavily on artificial intelligence in place of human moderators. More than ever, AI is now responsible for deciding what users can and cannot post on platforms. The pandemic led many technology companies to address staffing issues by replacing human processes with automation.

In one computational linguistics study, researchers discovered that tweets written in African American Vernacular English (AAVE) were twice as likely to be flagged as offensive. The finding points to a systematic racial bias against minority speech and representation on the platform.
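One simple way to surface a disparity like this is to compare how often a classifier flags posts from each group. The sketch below shows that arithmetic; the function name `flag_rates`, the group labels, and the counts are invented for illustration and are not data from the study.

```python
from collections import defaultdict


def flag_rates(records):
    """Map each group label to the share of its posts that were flagged."""
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, was_flagged in records:
        total[group] += 1
        flagged[group] += int(was_flagged)
    return {group: flagged[group] / total[group] for group in total}


# Invented toy data: (dialect label, flagged by the classifier?)
records = (
    [("aave", True)] * 4 + [("aave", False)] * 6
    + [("other", True)] * 2 + [("other", False)] * 8
)
rates = flag_rates(records)
# A ratio well above 1 means one group is flagged disproportionately often.
ratio = rates["aave"] / rates["other"]
```

With these made-up counts, 4 of 10 posts in one group are flagged against 2 of 10 in the other, so the ratio comes out to two. An audit along these lines only detects the gap; it does not explain where in the training data or the labeling the bias came from.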

TikTok describes a portion of its recommendation algorithm as “collaborative filtering,” where recommended content is personalized based on user interests and the behavior of similar users. The problem is that the biases present in society are reflected in user behavior and then reinforced by the algorithm.
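A minimal sketch of user-based collaborative filtering helps show why this feedback loop arises. It assumes each user is represented only by a set of liked items and measures similarity with Jaccard overlap; the user names, item names, and the `recommend` function are hypothetical, and TikTok's real system is certainly far more elaborate.

```python
def jaccard(a, b):
    """Overlap between two sets of liked items, from 0 to 1."""
    return len(a & b) / len(a | b) if a | b else 0.0


def recommend(target, likes):
    """Rank unseen items by the similarity of the users who liked them."""
    scores = {}
    for user, items in likes.items():
        if user == target:
            continue
        sim = jaccard(likes[target], items)
        # Each unseen item inherits the similarity of the user who liked it.
        for item in items - likes[target]:
            scores[item] = scores.get(item, 0.0) + sim
    # Highest score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))


likes = {
    "ana": {"dance_a", "dance_b"},
    "ben": {"dance_a", "dance_b", "dance_c"},
    "cara": {"cooking_a", "cooking_b"},
}
ranked = recommend("ana", likes)
```

Here the top recommendation for `ana` is `dance_c`, because the only user who resembles her liked it, while everything from `cara` scores zero. Whatever patterns exist in who follows and likes whom are carried straight into what gets shown next.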

On the platform’s ‘Creator Marketplace,’ Black creators have had to fight constantly for inclusion and credit. Terms such as “black success” and “Black Lives Matter” were purportedly suppressed in search results. Black dancers who create global dance trends on the web have been affected so negatively by the system that many called for a content strike in June last year. “Our TikTok Creator Marketplace protections, which flag phrases typically associated with hate speech, were erroneously set to flag phrases without respect to word order. We recognize and apologize for how frustrating this was to experience, and our team has fixed this significant error,” replied a TikTok spokesperson in a statement to NBC News.
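The word-order error described in that statement can be illustrated with a short sketch. It contrasts a filter that flags text whenever all the words of a blocked phrase appear anywhere with one that requires the words to appear together and in order. The blocklist entry, the sample bio, and both function names are hypothetical; this is not TikTok's filter.

```python
def flags_any_order(text, phrase):
    """Flag if every word of the phrase appears anywhere in the text."""
    return set(phrase.lower().split()) <= set(text.lower().split())


def flags_exact_phrase(text, phrase):
    """Flag only if the phrase's words appear consecutively and in order."""
    words, target = text.lower().split(), phrase.lower().split()
    return any(
        words[i:i + len(target)] == target
        for i in range(len(words) - len(target) + 1)
    )


blocked = "hurt people"  # hypothetical blocklist entry
bio = "I make videos for people who have been hurt"

loose = flags_any_order(bio, blocked)      # order-insensitive check
strict = flags_exact_phrase(bio, blocked)  # order-sensitive check
```

The order-insensitive check flags the harmless bio because both words occur somewhere in it, while the order-sensitive check lets it through. A filter built the first way will sweep up innocent phrases that merely share vocabulary with the ones it was meant to catch.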

Users suggested to you on the platform will typically match the race, age, or facial characteristics of those you already follow, because your feed is programmed to present content similar to what you have previously interacted with. The downside is that users rarely see people who differ from themselves or opinions that differ from their own, creating an echo chamber.

These social media platforms rely heavily on user data to operate effectively. That same user data has become a commodity to an unprecedented degree. With this comes a social responsibility for large technology companies to obtain and use analytic data ethically. In the coming years, digital diversity will be just as crucial as its real-world counterpart, because systemic biases carry over in the translation from human to machine.